The screenshot was beautiful. Forty-seven funnels, each one polished and branded, built in under five minutes using AI. The guy who posted it was thrilled, announcing his productivity to anyone who’d listen.

My first thought: this man just created 47 new ways to lose money.

Because he couldn’t make one funnel work. Not one. And now he had 47 of them, each as hollow as the last—just shinier and built faster.

I see this pattern every week. In audits, on calls, in the dashboards clients show me when they’re trying to figure out why nothing’s moving. He’s not an outlier. He’s a preview of where most businesses are headed.

Speed is the metric. Results are not.

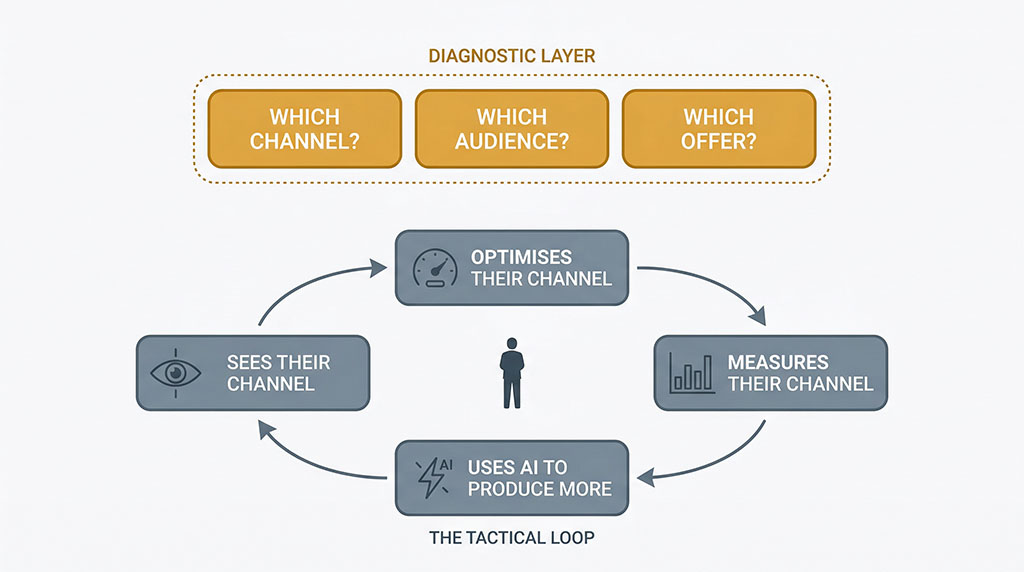

The dominant AI narrative right now is simple: more, faster. Make more content. Build more campaigns. Launch more ads. Test more variations. The reasoning goes that the tool is so powerful, sheer volume of output will produce results. Throw enough at the wall and something sticks.

You’ve seen the YouTube thumbnails. “I built 4 billion AI agents.” “I created a month of content in 20 minutes.” The metric everyone celebrates is speed—how fast you went from nothing to something. And the implicit promise beneath all of it is seductive: if you can just produce enough stuff, fast enough, the results will follow.

It’s a compelling story. It’s also responsible for a staggering amount of wasted money.

MIT researchers studied over 300 generative AI deployments backed by $30–40 billion in enterprise investment. Ninety-five percent delivered zero measurable impact on profit and loss. And the researchers were clear about why: the failures weren’t caused by flawed AI models. They were caused by misaligned priorities—companies pointing powerful tools at the wrong problems.

That finding should sound familiar. If the tools didn’t work, nobody would get results. But some people do. The difference is what they pointed the tool at before they hit “go.”

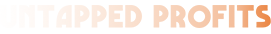

Specialists scale their tunnel vision

Here’s what I see playing out across almost every business I audit. A Facebook Ads specialist gets access to AI and uses it to build more Facebook Ads. A content marketer uses it to write more blog posts. An email team uses it to generate more subject lines. Everyone gets dramatically faster at the thing they already know how to do.

Nobody stops to ask whether that thing is the right thing.

This isn’t a character flaw. It’s a structural feature of specialisation. When your expertise is a hammer, AI turns it into a nail gun. You’re still hitting nails. You’re just hitting them at extraordinary speed—even if what the project actually needed was a screwdriver.

The result is tunnel vision at scale. The Google Ads person optimises bids. The content team optimises publishing cadence. The email person optimises send times. Every individual channel improves in isolation while the overall direction goes unexamined.

And AI—brilliant, powerful, obliging AI—accelerates all of it without once asking: are you sure this is what you should be doing?

Because that’s not what anyone asks it to do.

The gap isn’t technical. It’s cognitive. The skill that’s missing isn’t prompting—anyone can prompt. It’s thinking. Knowing which question to ask before you start building. And that skill has nothing to do with which AI tool you’re using.

I had a client last quarter who’d given three separate agencies access to AI tools. The Google Ads agency was using AI to generate ad variations. The content agency was using AI to write blog posts. The email team was using AI to A/B test subject lines. Every single one of them was performing well within their own reporting dashboard.

Revenue was flat. When I pulled the data into one view, the picture was obvious: the Google Ads agency was bidding on keywords the content team was already ranking for organically. They were paying for traffic they were already getting for free. Nobody saw it because nobody was looking at both.
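For anyone who wants to run the same cross-check, here is a minimal sketch of the idea, not the exact process from that audit. It assumes you can export paid keyword spend and organic ranking positions as two simple lookups. The function name, the sample keywords, the figures, and the "top 3" cutoff are all hypothetical placeholders.

```python
def normalise(keyword: str) -> str:
    """Lower-case and collapse whitespace so 'Blue  Widgets' matches 'blue widgets'."""
    return " ".join(keyword.lower().split())


def find_paid_organic_overlap(paid_spend, organic_positions, max_position=3):
    """Return paid keywords that already rank organically at max_position or better.

    paid_spend: dict of keyword -> monthly ad spend (hypothetical export).
    organic_positions: dict of keyword -> organic ranking position (1 is best).
    max_position: assumed cutoff for "already getting this traffic for free".
    """
    organic = {normalise(k): pos for k, pos in organic_positions.items()}
    overlap = []
    for keyword, spend in paid_spend.items():
        position = organic.get(normalise(keyword))
        if position is not None and position <= max_position:
            overlap.append((keyword, spend, position))
    # Biggest spend first, so the most expensive overlap is at the top.
    return sorted(overlap, key=lambda row: row[1], reverse=True)


# Illustrative data only, not client figures.
paid_spend = {
    "Blue Widgets": 1200.0,
    "widget repair": 300.0,
    "buy widgets online": 800.0,
}
organic_positions = {
    "blue widgets": 1,
    "widget repair": 9,
    "widget reviews": 2,
}

overlap = find_paid_organic_overlap(paid_spend, organic_positions)
for keyword, spend, position in overlap:
    print(f"{keyword}: paying {spend:.2f}/month while ranking #{position} organically")
```

An overlap is a prompt for a conversation, not an automatic cut. Sometimes bidding on a term you already rank for is deliberate. The point is that someone has to be looking at both lists at once.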

"But we test and iterate—that IS strategy"

This is where I expect some pushback. You might be thinking: I’m not the 47-funnel guy. I use AI to test, measure, and iterate. I’m finding the right direction through experimentation.

Fair objection. And it sounds right. But testing only works if your experiments cover the right territory.

Frank Kern—who’s been selling online for 26 years and runs OJOY.ai—tells a story that nails this. His software had a solid 58% trial-to-customer conversion rate. Good number. But too many new customers were cancelling in their first month, and he wanted to fix it.

So he did what any smart operator does when the numbers look wrong. Cleared the calendar. Dove in. He spent two solid months crunching data and testing hypotheses. Was the product attracting the wrong users? Was there a feature gap? Was the onboarding broken?

Two months of intense, focused investigation. Every hypothesis was wrong.

The real reason people cancelled? They were happy. They’d schedule a month’s worth of content, get exactly what they came for, and leave satisfied. The problem wasn’t dissatisfaction—it was that the product didn’t give them a reason to come back daily.

No amount of testing would have surfaced that answer—because every test assumed something was broken. He was testing for what’s wrong when the real question was what’s missing. The tool wasn’t the problem. The starting question was.

Here’s the part that stings: AI could have diagnosed this in hours. Feed it the usage data, the cancellation timing, the satisfaction signals, and ask an open-ended question—why are first-month customers leaving despite high satisfaction?—and the pattern would have been obvious.

But he didn’t use AI for the thinking. He used his own assumptions, and those assumptions kept him locked inside the wrong frame for eight weeks.

That’s the trap. “Test and iterate” feels like a direction. But if every iteration lives inside the same set of assumptions, you’re just the 47-funnel guy with better data hygiene.

AI is a thinking tool—not just a production line

This is the part most AI marketing content gets wrong. Not that AI is overhyped—it isn’t. AI is genuinely extraordinary. The mistake is treating it as a production-only tool when it’s equally powerful for diagnosis.

I run into this constantly. Business owners show me their AI-generated content calendars, their automated email sequences, their ad variations—all built at speed. Then I ask what data they fed AI before they started building. The answer is almost always nothing. They skipped the thinking and went straight to the making.

Consider what happens when you use AI to diagnose before you produce.

Kern—after wasting two months on the wrong question—eventually identified his three highest-value activities across 26 years of selling online. Just three: creating valuable content that drives email signups, sending deadline-based offers, and running live workshops. That’s it. Twenty-six years of online business, and the overwhelming majority of revenue traced back to those three things. Everything else was noise.

He could have found this pattern in an afternoon. Export 26 years of sales data. Export every campaign, every email, every launch. Ask AI: which activities correlate most strongly with revenue? The answer was sitting in the data the entire time. He just never thought to ask.

Or take another finding from his business: his YouTube videos with the highest view counts had zero correlation with customer acquisition. The videos that actually drove sales? Often around 900 views.

Because view count measures reach. View duration—how long people actually watched relative to the video’s length—measures value. And the people who watched all the way through a 900-view video were exactly the right buyers.

That insight doesn’t come from producing more videos faster. It comes from asking AI a diagnostic question: which of my videos actually lead to customers, and what do those videos have in common?
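The same diagnostic question can be sketched in a few lines, assuming you can export views, average watch time, video length, and attributed customers per video. The field names and every number below are made up for illustration. They are not Kern's data, and attribution in practice is messier than a single column.

```python
from dataclasses import dataclass


@dataclass
class Video:
    title: str
    views: int
    avg_watch_seconds: float
    length_seconds: float
    customers: int  # customers attributed to this video (assumed to be available)

    @property
    def watch_ratio(self) -> float:
        """Share of the video the average viewer watched (0.0 to 1.0)."""
        if self.length_seconds <= 0:
            return 0.0
        return self.avg_watch_seconds / self.length_seconds

    @property
    def customers_per_1000_views(self) -> float:
        """Customers generated per thousand views: value, not reach."""
        if self.views <= 0:
            return 0.0
        return 1000 * self.customers / self.views


# Hypothetical sample data.
videos = [
    Video("Viral hot take", 50_000, 45, 600, 2),
    Video("Niche walkthrough", 900, 540, 600, 9),
    Video("Product update", 4_000, 120, 480, 4),
]

by_reach = sorted(videos, key=lambda v: v.views, reverse=True)
by_value = sorted(videos, key=lambda v: v.customers_per_1000_views, reverse=True)

print("Ranked by views:       ", [v.title for v in by_reach])
print("Ranked by customers/1k:", [v.title for v in by_value])
for v in by_value:
    print(f"{v.title}: watch ratio {v.watch_ratio:.0%}, "
          f"{v.customers_per_1000_views:.2f} customers per 1,000 views")
```

In this made-up sample the two rankings are exact opposites, which is the whole argument in miniature: sort by the wrong column and you will scale the wrong video.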

The pattern is always the same. Use AI to find the right direction first. Then use AI to scale in that direction.

Build one funnel that works. Then build the next one. Not 47 at once.

If you want to try this right now, here’s where to start. Take your last 12 months of sales data—every campaign, every channel, every revenue source—paste it into AI and ask: which three activities generated 80% of this revenue? You’ll probably be surprised by the answer. And you’ll definitely stop building things you don’t need.
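If you would rather check the arithmetic yourself than take AI's word for it, the calculation is a cumulative revenue share. The sketch below assumes your sales export can be reduced to (activity, revenue) pairs. The activity names, the amounts, and the 80% threshold are placeholders, and your data may well need more than three activities to reach the threshold, or fewer.

```python
from collections import defaultdict


def top_revenue_activities(sales, threshold=0.8):
    """Return the smallest set of top activities whose combined revenue reaches threshold.

    sales: iterable of (activity, revenue) pairs, one per sale or campaign.
    Returns a list of (activity, revenue, cumulative_share), largest first.
    """
    totals = defaultdict(float)
    for activity, revenue in sales:
        totals[activity] += revenue

    grand_total = sum(totals.values())
    if grand_total <= 0:
        return []

    ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)
    result = []
    running = 0.0
    for activity, revenue in ranked:
        running += revenue
        share = running / grand_total
        result.append((activity, revenue, share))
        if share >= threshold:
            break
    return result


# Hypothetical 12-month export, already reduced to (activity, revenue).
sales = [
    ("live workshop", 40_000),
    ("deadline offer email", 30_000),
    ("content signup", 15_000),
    ("podcast ads", 5_000),
    ("display ads", 4_000),
    ("live workshop", 5_000),
    ("affiliate", 1_000),
]

top = top_revenue_activities(sales)
for activity, revenue, share in top:
    print(f"{activity}: {revenue:,.0f} (cumulative {share:.0%})")
```

Whatever falls below the cutoff is the list of things to question before you ask AI to build more of them.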

When speed IS the answer

To be fair: once you’ve found the right direction, speed matters enormously.

Kern built a single book funnel in 2012. One funnel—well-constructed, aligned to what actually worked. It ran profitably for twelve years straight, all the way through to late 2024. One funnel. Twelve years.

And many of the customers it generated eventually became users of his current software.

That’s where AI-powered speed becomes valuable. Not in building 47 untested funnels, but in building the second thing after the first thing proves it works. Make one asset excellent. Measure whether it actually performs. Then use AI to create another one—a different angle, a different format—and measure that too.

It’s not about producing more. It’s about producing more of what works, after you’ve confirmed it works.

The formula is boring. That’s probably why nobody posts screenshots of it.

One funnel, one question

Those 47 funnels are still out there somewhere, I assume. Beautiful. Polished. Completely inert.

But picture this instead. The same person. The same AI. The same five minutes. Except before he built anything, he asked a different kind of question. Not “build me 47 funnels” but “here’s my sales data, my customer feedback, my traffic sources—what should I build?”

One funnel. Pointed at the right audience, with the right offer, built on an insight that only his data could reveal.

That’s the shift. Not a new tool. Not a new prompt. A new first question.

The AI was never the problem. The 47 funnels were never the problem. The problem was the question he asked before he started building, which was none at all. He skipped the thinking and went straight to the making.

Most businesses are doing exactly this right now. I see it in every audit. The dashboards are full. The activity is impressive. And somewhere buried in the data they never fed to AI is the answer to why none of it is working.

The tool doesn’t care which way you point it. It’ll build 47 funnels or answer one diagnostic question with equal enthusiasm.

Choose the question.

See what one brain would change

Map your current agency setup against the integrated model—and find out where the gaps, overlaps, and wasted spend are hiding.

Most clients find 2–3 channels working against each other that nobody spotted because nobody could see them side by side. Takes 30 minutes.

You don’t need a better agency. You need to stop being the one holding it all together.