Two people sit down at the same AI tool. Same screen. Same blinking cursor.

One types: “Write me a blog post about marketing.”

The other has spent three weeks studying Sean D’Souza’s narrative architecture, built a five-phase production system with structural audits and humanity checks, and mapped out exactly where a reader’s attention spikes and crashes across 2,000 words.

They both hit enter. One gets slop. The other gets something a professional editor would approve.

The difference isn’t talent. It’s not even experience—not in the traditional sense. It’s something Scott Adams never accounted for when he made skill stacking famous.

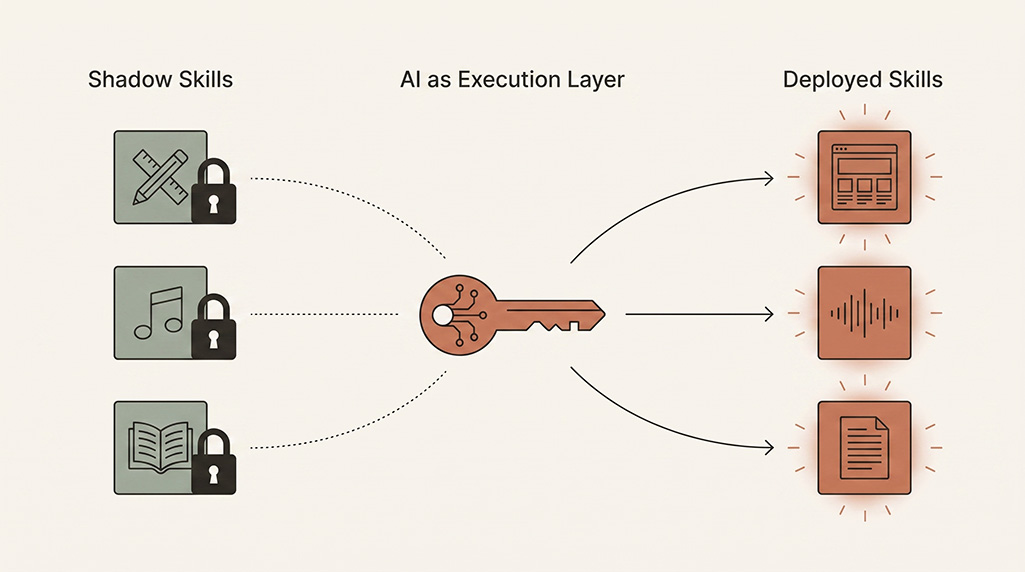

The original stack had a hidden assumption

Adams’ concept was elegant. In How to Fail at Almost Everything and Still Win Big, he laid out two paths to becoming extraordinary: be the absolute best at one thing (near-impossible), or reach the top 25% in two or more complementary skills and combine them. His own proof? Decent drawing, decent writing, ordinary business skills, and a good sense of humour stacked into Dilbert—a comic strip syndicated in 65 countries. As he put it, capitalism rewards things that are both rare and valuable.

The model worked because execution was the bottleneck. If you couldn’t physically do the work—write the code, design the layout, edit the video—the skill didn’t count. You could have the best eye for typography in the world, but if you couldn’t operate Illustrator, that eye stayed locked in your head. Like owning a boat with no ocean.

Adams assumed every skill in the stack needed a minimum threshold of execution ability. You had to be at least “good enough” at doing the thing.

That assumption held for decades. It collapsed in about eighteen months.

The execution layer dissolved

AI didn’t just lower the barrier to execution. It removed it for entire categories of work.

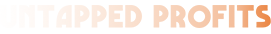

Think about what that means for shadow skills—abilities you’ve carried for years but couldn’t deploy because deploying them meant becoming someone else entirely.

That killer eye for design you shelved because learning front-end development felt like a second career? You can build with it now. That ear for music you never trained into production skills? You can produce with it now. That instinct for what makes a good story, trapped because you couldn’t write 2,000 polished words in a sitting? The bottleneck is gone.

You’ve felt this. Maybe you haven’t named it yet, but you’ve felt it—that moment where something you’d given up on suddenly felt possible again.

The distance between “I can see it” and “I can ship it” used to be measured in years. Now it’s measured in prompts.

But here’s where most people get the story wrong.

The new bottleneck isn’t what you think

The popular narrative says AI democratised skill. Everyone can do everything now. The playing field is level.

It’s not level. The bottleneck shifted.

The old stack rewarded execution competence: can you do this? The new stack rewards curatorial judgment: do you know what’s worth doing and whose thinking to build on?

Go back to those two people at the same screen. Person A typed a generic prompt because they didn’t know what good looked like. Person B built an entire architecture around borrowed genius—they studied a specific expert’s methodology, identified which elements would translate, and designed a system that directed AI to stand on those shoulders.

You’ve seen the gap yourself. Scroll through LinkedIn for five minutes and you can smell the difference between AI-assisted content with a human driving and AI content with nobody at the wheel.

Person B’s advantage isn’t technical skill. It’s taste. It’s knowing that D’Souza’s “Wall” technique—placing a major objection at the 40% mark of any article—creates the tension that keeps readers scrolling. It’s recognising that a “Sandwich Closure” where your ending mirrors your opening creates psychological resolution that a generic conclusion never will.

None of that is execution. All of it is judgment.

The judgment stack

Here’s what the new skill stack actually looks like:

Layer 1: Domain taste. You understand quality in your field. Not theory—you can look at two outputs and instantly know which one works and why. This comes from exposure, not production. Years of reading great copy teaches you to recognise great copy, even if you never wrote a headline professionally.

Layer 2: Curatorial research. You know whose shoulders are worth standing on. Not every expert is equal. Not every framework survives contact with real work. The skill is identifying which thinkers, which methodologies, which mental models actually produce results when applied—and which ones just sound good in a LinkedIn post.

Layer 3: System design. You can translate borrowed genius into repeatable processes. This is the gap between “I read a great book” and “I built a production workflow based on that book’s principles.” Person B didn’t just read D’Souza. They turned his architecture into a checklist that runs every time.

Layer 4: AI direction. You can instruct AI precisely enough to execute at the level your taste demands. This isn’t prompt engineering in the parlour-trick sense. It’s the ability to break your judgment into instructions specific enough that the output matches your mental model of quality.

Notice what’s missing from that stack: the ability to physically produce the work yourself.

That used to be non-negotiable. Now it’s optional.

"But anyone can fake expertise with AI"

This is the objection worth taking seriously. Because on the surface, it looks true.

Someone with zero marketing knowledge can ask AI to write a marketing strategy. Someone with no design sense can generate fifty logos in an hour. A person who’s never written a line of copy can produce a 3,000-word article in minutes.

And all of it will be mediocre.

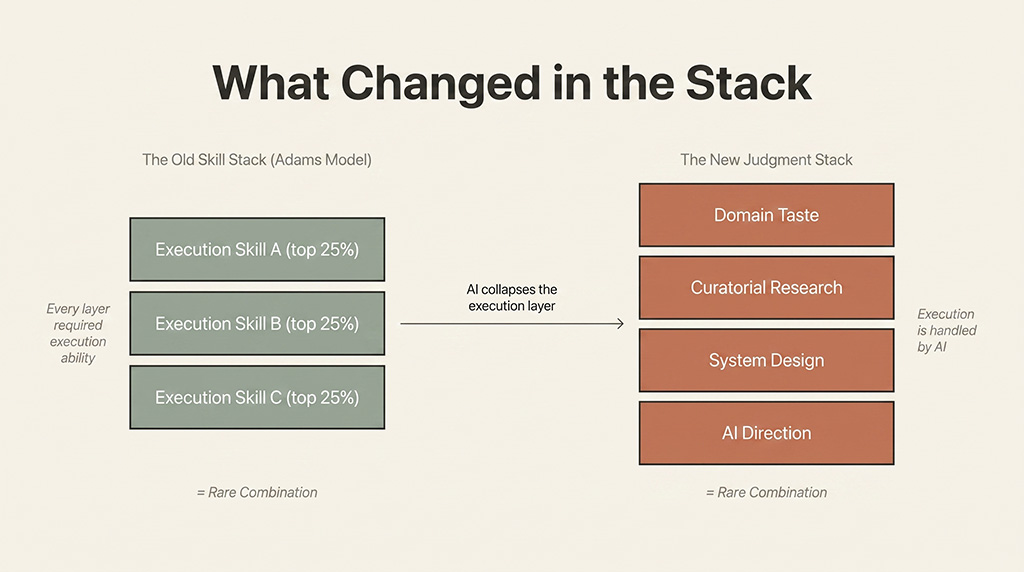

MIT research from early 2025 put a number on this: AI could handle roughly 65% of text-based tasks at a “minimally acceptable” level. Minimally acceptable. Not good. Not sharp. Not the kind of work that makes a client lean forward in their chair. The kind of work that technically exists.

This is what the “AI replaces everything” crowd misses entirely. AI without judgment produces competent-looking garbage. It passes the glance test. It fools people who don’t know the domain. But anyone with actual taste spots it in seconds—the generic structure, the safe choices, the absence of a point of view. You’ve seen it yourself. Your inbox is full of it. Your LinkedIn feed reeks of it.

The person who types “write me a blog post” gets something that looks like a blog post the same way a wax apple looks like fruit. Structurally correct. Nutritionally empty.

The person who built a five-phase system—with a pre-flight analysis that identifies the single strongest angle, a structural audit based on proven narrative architecture, a humanity pass that strips out every AI tell—gets something that reads like a person with fifteen years of experience wrote it.

Both used the same tool. The difference is everything that happened before they opened it.

It’s the difference between handing a Stradivarius to someone who’s never held a violin and handing it to someone who’s studied under three master teachers. Same instrument. The music isn’t even comparable.

PwC’s 2025 AI Jobs Barometer found that employer skill demands are shifting 66% faster in AI-exposed roles than elsewhere. The skills rising fastest? Not technical prompting. Judgment. Evaluation. Quality assessment. The market is already pricing in what most people haven’t figured out yet: the tool isn’t the advantage. The person directing it is.

Where deep skill still matters

This isn’t a story about shortcuts replacing work. Shadow skills aren’t skills you never developed at all—they’re skills you developed but couldn’t deploy.

The designer who shelved their eye for visual hierarchy didn’t lack ability. They lacked the technical execution layer to ship what they could see. AI provides that layer.

But someone who’s never noticed visual hierarchy? AI can’t give them an eye they never trained.

The new model still demands investment. The investment just shifted. Instead of spending three years learning Illustrator so you can execute your design taste, you spend three weeks studying what makes design effective so your taste sharpens. Instead of a coding bootcamp, you study enough programming logic to direct AI precisely. You know the principle—work smarter, not harder. This is the 2026 version of it.

The hours don’t disappear. They migrate from execution training to judgment training.

And judgment training looks different. It’s reading D’Souza instead of learning WordPress. It’s studying Cialdini’s persuasion frameworks instead of memorising email platform features. It’s developing curatorial taste—the ability to scan a hundred possible approaches and identify the three worth testing.

The question worth sitting with

Two people will finish reading this article. Same screen. Same moment.

One will think: that’s interesting. Then they’ll open AI and type something generic and wonder why the output feels flat.

The other will ask a different question: whose shoulders should I be standing on? They’ll identify the expert worth studying, the methodology worth adapting, the shadow skill worth finally unlocking—and they’ll build a system that turns borrowed genius into their own competitive edge.

The tool is the same. It was always the same.

The stack changed.

Your shadow skills aren’t dead weight. They’re unlocked inventory. The question is whether you have the judgment architecture to deploy them. If you want help building that system for your marketing, we should talk.