Jordan Tice picked up a guitar at twelve. By the time he graduated high school, he was touring the mid-Atlantic with professional bluegrass musicians and had signed his first record deal.

Cal Newport picked up a guitar at twelve too. By the time he graduated high school, he could play a few hundred songs, had performed at school talent shows, and had reached exactly the level of ability you’d expect from six years of serious effort.

Same instrument. Same starting age. Radically different outcomes.

Newport—who went on to write So Good They Can’t Ignore You—spent years trying to understand that gap. What he found wasn’t about talent, natural ability, or even raw hours. It was about something far more uncomfortable: what Tice did with his time that Newport didn’t.

Tice practiced. Newport played.

The Number That Should Worry Every Marketing Team

In 2005, a research team led by psychologist Neil Charness at Florida State University published the results of a decades-long study into chess players. His team surveyed over four hundred ranked players, mapping their full development histories—when they started, how they trained, how many tournaments they played, how much time they spent in structured study.

Here’s what they found: grand masters and intermediate-level players had logged roughly the same total hours. The 10,000-hour rule, popularised by Malcolm Gladwell, treats all hours as equivalent. Charness’s data showed they aren’t.

Grand masters dedicated around 5,000 of their 10,000 hours to serious study: working through problems, finding weaknesses, eliminating them. Intermediate players dedicated around 1,000 hours to serious study and spent the remaining 9,000 playing tournament chess.

Five times more serious study. That’s the entire gap between good and exceptional.
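For anyone who wants to check the arithmetic, here is a small sketch that uses only the round figures quoted above. The variable names are illustrative and do not come from the study itself.

```python
# Round figures quoted in the text; variable names are illustrative.
TOTAL_HOURS = 10_000
study_hours = {"grand master": 5_000, "intermediate": 1_000}

# Share of total hours each group spent on serious study.
for level, hours in study_hours.items():
    share = hours / TOTAL_HOURS
    print(f"{level}: {hours:,} study hours, {share:.0%} of total")

# How many times more serious study the grand masters logged.
ratio = study_hours["grand master"] / study_hours["intermediate"]
print(f"Study ratio: {ratio:.0f}x")
```

Half of a grand master's hours went to serious study, against a tenth of an intermediate player's.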

Tournament chess—competitive play under real conditions—feels like practice. You’re doing the thing. You’re engaged. You’re getting results. But the Charness data is unambiguous: tournament play, by itself, doesn’t make you better. It makes you experienced. Those are not the same thing.

Now ask yourself: what does your marketing team’s version of “tournament chess” look like?

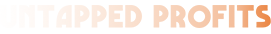

What Marketing Teams Actually Do

A campaign brief goes out. The team builds creative. Media gets planned and bought. Everything launches on schedule. Four weeks later, someone pulls the numbers, the team reviews performance in a Monday meeting, notes what worked, and begins planning the next campaign.

This is tournament chess. You are doing the thing, under real conditions, getting real results. And after five years of it, your team is experienced, confident, and roughly as skilled as it was after year two.

The experience accumulates. The skill plateaus.

Newport describes watching Tice practice a new guitar passage. Tice would set the tempo just slightly faster than he could comfortably manage—not so fast the technique broke down entirely, just fast enough that he couldn’t quite make it work. He would play until he hit a wrong note. Stop immediately. Start again from the beginning. He would repeat this, sometimes for hours, until the passage was clean at that speed. Then he’d push the tempo up again.

“It’s a physical and mental exercise,” Tice explained. “You’re trying to keep track of different melodies and things.”

There’s a specific kind of discomfort in that process. Newport admits he hated it as a teenager—the mental strain of working through something not yet ingrained in muscle memory. So he stuck to songs he already knew. He got very good at playing things he could already play.

Most marketing teams are doing the same thing.

The Difference Between Reviewing and Practicing

Here’s where most marketers push back: we do analyse our campaigns. We look at what worked and do more of it.

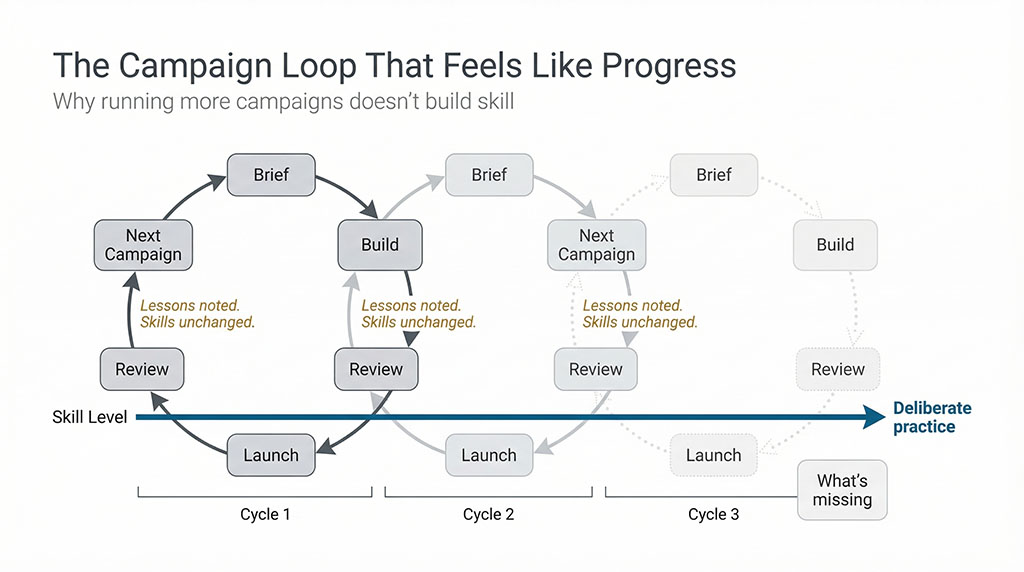

This is reviewing outcomes. It is not deliberate practice. The difference matters enormously.

Reviewing outcomes tells you what happened. Deliberate practice isolates why it happened, targets the specific weakness, and repeats structured drills with immediate feedback until that weakness is gone.

Newport applies the chess research to knowledge work: “If you just show up and work hard, you’ll soon hit a performance plateau beyond which you fail to get any better.” He’s not describing lazy teams. He’s describing competent, hardworking teams who have optimised for doing the work—and never built a system for improving at it.

Tice didn’t just know that he’d missed a note. He stopped the moment he missed it. He didn’t finish the passage and reflect afterwards. Immediate feedback. Immediate correction. Immediate repetition.

In your last campaign debrief, how many hours did your team spend on why the headline underperformed versus what they’d write differently next time? How many iterations did they run on that specific weakness before the next campaign launched? Did anyone isolate the single variable that drove the performance gap and then deliberately practice improving it?

Or did you review the numbers, note the lessons, and move on to the next campaign?

What Deliberate Practice Actually Looks Like in Marketing

Newport is precise about what qualifies. Deliberate practice has three non-negotiable components: the activity must stretch you past your current comfort zone, it must be designed to improve a specific weakness, and feedback must be immediate—not quarterly.

Here’s what this looks like applied:

A copywriter who wants to improve headline performance doesn’t get there by writing more campaigns. They write twenty headline variations for the same brief, deliberately pushing past the point where they feel confident, then run them against each other with the explicit goal of understanding the mechanism, not just recording the winner. They study the variations that beat their control until they can articulate exactly why. They then write twenty more, applying that understanding, and test again.

That’s deliberate practice. It’s uncomfortable. It produces no immediately shippable campaign. Most teams won’t do it because it doesn’t look like output.
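The headline exercise above can be sketched in a few lines of code. This is a minimal illustration, not a method taken from Newport or Ericsson. It assumes you have clicks and impressions for a control headline and for each variation, and it uses a standard two-proportion z-test to flag the variations that beat the control by more than noise. All figures, names, and the threshold are hypothetical.

```python
import math

def z_score_vs_control(variant, control):
    """Two-proportion z-score: variant click rate minus control click rate."""
    v_clicks, v_impr = variant
    c_clicks, c_impr = control
    pooled = (v_clicks + c_clicks) / (v_impr + c_impr)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / v_impr + 1 / c_impr))
    return (v_clicks / v_impr - c_clicks / c_impr) / std_err

# Hypothetical results: (clicks, impressions) per headline.
control = (200, 10_000)
variants = {
    "variant_a": (260, 10_000),
    "variant_b": (205, 10_000),
    "variant_c": (150, 10_000),
}

Z_THRESHOLD = 1.96  # assumed cut-off, roughly 95% confidence (two-sided)

def winners(variants, control, threshold=Z_THRESHOLD):
    """Return (name, z) for variants that clearly beat the control, best first."""
    beaten = []
    for name, result in variants.items():
        z = z_score_vs_control(result, control)
        if z > threshold:
            beaten.append((name, round(z, 2)))
    return sorted(beaten, key=lambda item: item[1], reverse=True)

for name, z in winners(variants, control):
    print(f"{name} beat the control (z = {z})")
```

The list it prints is the starting point for the real work: explaining why those headlines won. With twenty variations in play, a few will clear a 1.96 threshold by chance alone, so a stricter cut-off or a repeat test is a sensible precaution before drawing conclusions.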

A media buyer who wants to improve audience targeting doesn’t improve by running more campaigns to more audiences. They pick one campaign, isolate one audience variable, and run the test with the explicit intent of understanding that specific variable’s effect—not to hit a quarterly performance target, but to add a new capability to what they know. They chase the feedback loop until it closes.

Newport notes that Anders Ericsson, who coined the term deliberate practice, found the same pattern across chess, medicine, music, sports, and computer programming: “Most individuals who start as active professionals change their behaviour and increase their performance for a limited time until they reach an acceptable level. Beyond this point, further improvements appear to be unpredictable.”

They plateau. Not because they stop working. Because they stop practicing.

"We Don't Have Time for This"

Most marketing teams run at campaign cadence. There’s always a launch coming, a deadline approaching, a result expected by the end of the quarter. The idea of pulling a senior copywriter off campaign production to run twenty headline iterations that won’t ship feels like a luxury for companies with bigger teams and slower pipelines.

This is the right objection. It’s also exactly what the intermediate chess players told themselves about serious study.

Tournament play feels productive. It produces outcomes. It generates numbers to report. Deliberate practice produces nothing shippable, just capability, quietly accumulating, until the gap between teams that practice and teams that don’t becomes obvious.

Newport doesn’t argue for abandoning output. He argues for treating a small, protected portion of working time as practice—explicitly designed, specifically targeted at known weaknesses, with feedback structured to close fast. Not instead of campaigns. Alongside them.

The question isn’t whether you have time. The question is whether experience and skill have quietly drifted apart on your team—and how long you’re prepared to let the gap widen before you notice.

Jordan Tice, halfway through an interview about his practice routine, picked up his guitar and knocked off a solo from an Allman Brothers record he’d learned by ear as a teenager. “Great melody,” he said. He still had it, note-perfect, a decade later.

That’s what deliberate practice actually builds: something that doesn’t erode when the conditions change, the platform algorithm updates, or the market shifts. Not campaign performance. Capability.

The next campaign your team runs will produce results either way. The question is whether anyone on the team will be better at marketing when it’s finished.

Before you can build deliberate practice into your marketing, you need a clear picture of what’s actually working and where the real skill gaps are. Most teams can’t get that picture because their data is scattered across channels with no one reading all of it. An Agency Waste Audit changes that—pulling everything into one view so you know exactly what to practice. [Book yours here →]