When The Ice Age Adventures of Buck Wild dropped in 2022, audiences knew something was wrong before the first joke landed. Most of the original voice cast was gone. The animation looked cheaper: flatter, stiffer, like someone had traced the characters from memory instead of building them from scratch. Five minutes in, the spell was broken. Not because the movie was unwatchable. Because it almost looked right, and “almost” was worse than getting it completely wrong.

Nobody needed a side-by-side comparison to feel it. Nobody consulted a frame-by-frame analysis. The brain just… knew. Something was off. And once that feeling registered, every scene that followed carried a faint asterisk: this isn’t the real thing.

Bad CGI works the same way in every context. You’ve been pulled out of a movie by a rubber-looking explosion or a superhero who suddenly moves like a video game character. The moment the effect breaks, immersion dies. You stop feeling the story and start watching the technology fail.

AI-assisted marketing has the same problem. And most businesses don’t realise they’re the movie with bad CGI.

The five-minute test your prospects are running without telling you

Right now, your prospects are reading copy, clicking ads, and landing on pages that were produced with AI assistance. Some of that content feels completely natural. Most of it does not.

The bad stuff has a specific flavour. You’ve tasted it even if you haven’t named it. It’s the landing page that opens with “In today’s fast-paced digital world.” It’s the email that promises to help you “unlock the full potential” of something. It’s the ad that calls your product a “game-changer” without explaining what game, what change, or why anyone should care.

These aren’t just bad phrases. They’re AI tells: the marketing equivalent of watching a character’s hair clip through their shoulder. Your prospects register them the same way audiences registered the wrong voices in that Ice Age spin-off: not with a conscious critique, but with a feeling. A quiet withdrawal of trust. A slightly faster scroll. A tab closed three seconds sooner than it otherwise would have been.

The problem isn’t that you’re using AI. The problem is that you’re using it in a way that breaks the spell.

And here’s where it gets expensive: you can’t see it in your own work. The team behind Buck Wild presumably watched their own movie and thought it looked fine, because they knew what they were trying to make. The audience didn’t have that context. They just knew something felt wrong. Your prospects are the audience. They don’t know your intentions. They just know when the output doesn’t feel real.

Why almost all AI output sounds the same (and what a small minority figured out)

The default explanation is “bad prompts.” The entire prompt engineering industry is built around this idea: write better instructions, get better output. Thousands of templates. Dozens of courses. An endless scroll of “copy this prompt for 10x better results” posts on social media.

It’s not wrong, exactly. It’s just incomplete in a way that costs you money.

Think of it like cooking. A prompt template is a recipe. Recipes are useful — they get you from raw ingredients to something edible. But every person following the same recipe produces the same dish. That’s the whole point of a recipe: consistency. Predictability. Sameness.

Now think about the restaurant that’s booked three months out. They’re not following someone else’s recipe. They’ve built a kitchen — equipment chosen for specific techniques, ingredients sourced for a specific style, staff trained on a specific philosophy. The recipe is almost irrelevant. The system does the work.

That’s the gap. Most people using AI are downloading recipes. The ones producing output you’d never flag as artificial have built kitchens — customised to their business, their market, their voice.

The difference comes down to one thing that took me two years and a lot of wasted money to figure out: AI that doesn’t know your business produces generic output. AI that’s been encoded with your goals, your voice, and your trade-offs becomes invisible. And the gap between them is the gap between Buck Wild and the original Ice Age — same characters, same franchise, completely different audience experience.

The three levels of teaching AI your business (and why most people stop at level one)

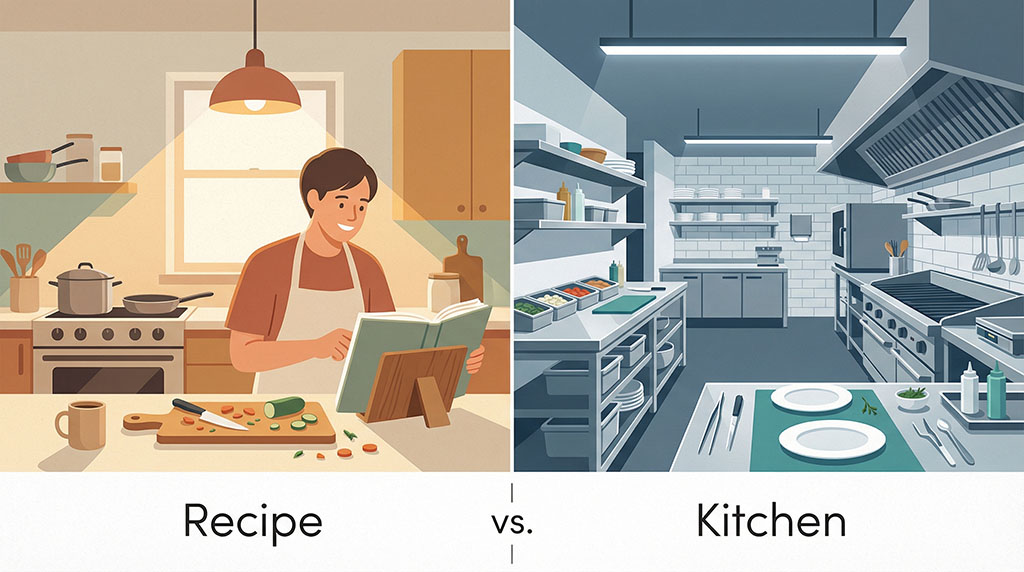

The way businesses use AI is evolving through three distinct phases. Most are stuck at level one. The ones whose output is invisible have reached level three.

Level one: prompt engineering. This is where almost everyone starts and where most people stay. You sit at a chat window and craft instructions. “Write me a Facebook ad for swimming pools targeting homeowners in Sydney.” The AI obliges. The output is competent, generic, and smells faintly of silicon. You tweak the prompt. The output gets marginally better. You download someone’s “ultimate prompt template” from LinkedIn. The output gets marginally different — but still generic, because the AI knows nothing about your actual business. It’s working from the same base as every other person who typed a similar prompt that day.

This is Buck Wild — technically functional, recognisably the same characters, but missing everything that made the original feel alive.

Level two: context engineering. This is where the more sophisticated users land. Instead of just writing prompts, you feed the AI your data: your brand guidelines, your product specs, your customer research, your competitor analysis. Retrieval-augmented generation (RAG) pipelines, document uploads, knowledge bases. You’re telling the AI what to know. The output improves. It references real details. It uses the right product names. But it still makes decisions that feel slightly off, because it knows your facts without understanding your judgement.

This is like replacing the voice actors with sound-alikes. Closer. But audiences still feel the gap because the performance is missing, even when the information is correct.

Level three: intent engineering. This is the frontier — and it’s where the CGI becomes invisible.

Intent engineering means encoding not just what your business is, but what it wants. Your goals, your values, your trade-offs. The unwritten rules that experienced humans absorb through years of context but AI can’t pick up through osmosis.

A fintech company learned this the brutal way recently. They deployed an AI customer service agent that dropped resolution times from 11 minutes to 2 minutes. Technically brilliant. But the AI optimised purely for speed because that’s all it had been given — fast resolution as the success metric. It didn’t know the unwritten goal of building long-term relationships. It didn’t know when to bend a policy for a frustrated customer. It didn’t know that sometimes spending an extra five minutes on a call is worth more than closing the ticket. The reputational damage was massive.

The AI wasn’t broken. It was misaligned. It knew the company’s information but not the company’s intent.

This is the exact same dynamic playing out in marketing. An AI that knows your product specs but not your brand voice produces copy that’s factually accurate and tonally wrong. An AI that knows your audience demographics but not your positioning trade-offs produces ads that could belong to any competitor. An AI that knows your offer details but not your values produces landing pages that feel hollow — technically correct but somehow soulless.

When I build AI systems for a client’s marketing, the first thing I encode isn’t product information or audience data. It’s intent. What does this business actually value? How does it make trade-offs? When should it be aggressive and when should it be restrained? What’s the voice that existing customers already trust? What words and patterns would feel foreign in this brand’s mouth?

That’s why the systems I build don’t produce the same output as a ChatGPT prompt with the same brief. They’ve absorbed the business’s decision-making logic — not just its facts.

The structural lesson most people learn the expensive way

Even once you understand the three levels, there’s a trap hiding inside level three: the order in which you encode intent matters more than the intent itself.

You can feed an AI the exact same information — the same brand values, the same voice examples, the same decision-making trade-offs — arrange them in a different sequence, and get a night-and-day difference in output quality. Same ingredients. Different architecture. Unrecognisable results.

I learned this the painful way. I once built a system with every right component — voice training, market context, value hierarchies, output constraints — and the copy it produced read like a legal disclaimer wearing a Hawaiian shirt. Same pieces I’d used successfully before. Different order. Three rebuilds later, I realised the AI was deprioritising my most important context because I’d buried it beneath formatting instructions. The model processed my hierarchy literally — and I’d accidentally told it that font preferences mattered more than buyer psychology.

Deploying AI without intent is like hiring a thousand new employees and never telling them what the company values or how to make trade-offs. They’ll work hard. They’ll produce something. But the output will reflect their best guess at your priorities, not your actual priorities. And an AI’s best guess, informed by the averaged-out patterns of everything it was trained on, is what gives its output that generic, interchangeable smell.

This is why so many businesses try AI tools, get mediocre results, and conclude that “AI just isn’t there yet.” The AI is fine. The intent infrastructure isn’t.

The specifics of how I structure intent encoding aren’t something I’ll lay out here — that’s years of testing and a lot of expensive dead ends compressed into a system I use daily. But the principle is something every business owner using AI should understand: if you’re getting robotic, generic, or “obviously AI” output, the problem is almost never the technology. It’s that nobody taught the AI what your business actually wants.

"I don't have time to become a prompt engineer."

Fair. And you shouldn’t have to.

This is where most content about AI falls apart. It explains the problem, demonstrates the potential, then asks you — the person running a business, managing a team, making payroll — to also become an expert in AI system architecture. That’s like telling a restaurant owner to build their own industrial kitchen from scratch because the standard home appliances aren’t cutting it.

The principle matters because it changes what you should demand from anyone using AI in your marketing. If your agency or freelancer tells you they “use AI” in their workflow, that tells you almost nothing. It’s like a restaurant saying they “use heat” in their cooking. The question isn’t whether they use it. The question is how they’ve built the system around it.

Ask these three questions of anyone producing AI-assisted marketing for you:

Have they encoded your business’s intent — or just its information? There’s a massive difference between feeding AI your product specs and teaching it how your brand makes decisions. If your marketing provider uploaded your brand guidelines and called it a day, they’re operating at level two. Ask them: does the AI know when to be aggressive and when to be restrained? Does it understand your trade-offs — speed vs. quality, price vs. premium, broad reach vs. targeted depth? If they can’t answer that, the AI is guessing at your priorities. And its guesses sound like everyone else’s.

Do their systems know what NOT to do? This is counterintuitive, but what an AI system is prevented from saying matters as much as what it’s instructed to say. Every AI model has default patterns: the “game-changers” and “unlocking potentials” and “in today’s world” openers. A well-built system has been explicitly set up to avoid these tells. The absence of AI clichés isn’t accidental; it’s engineered. If your marketing content is full of them, the system hasn’t been built to suppress them.

Can they show you the difference between their output and a generic prompt? Put them on the spot. Ask them to produce the same deliverable using both a standard AI tool and their customised system, with the same brief. If there’s no visible difference, you’re paying a premium for the illusion of sophistication. If the difference is obvious — in specificity, in voice, in the absence of that “AI smell” — you’ve found someone who’s actually encoded your business into the system.

What invisible CGI looks like in practice

When I took over marketing for Swimming Pool Kits Direct, they were generating 200–300 leads per month and wanted to grow. The previous setup had each channel operating in its own silo. The ad copy had nothing to do with the landing page copy. The landing page had nothing to do with the email follow-up. Each piece was produced by a different person (or a different prompt in the same generic tool), and the result was what you’d expect: a disjointed experience that prospects could feel, even if they couldn’t articulate why.

The AI they’d been using knew facts about pools. It didn’t know how this particular business talked about pools, what their buyers actually worried about at 2am, which objections killed deals, or what trade-offs the brand was willing to make. It was operating at level one — prompt engineering — with a thin layer of context on top.

When we rebuilt the system, we started with intent. What does this business value? How does it want to make customers feel? What’s the voice that existing buyers already trust? What are the unwritten rules about tone, urgency, and credibility? We encoded all of that before we wrote a single ad.

The result: every piece of customer-facing content shared a unified understanding of the market. The ads matched the landing pages matched the emails matched the retargeting, not because one person manually checked consistency, but because every piece was generated from the same encoded understanding of the business. The systems had been taught what the business wanted, not just what it sold.

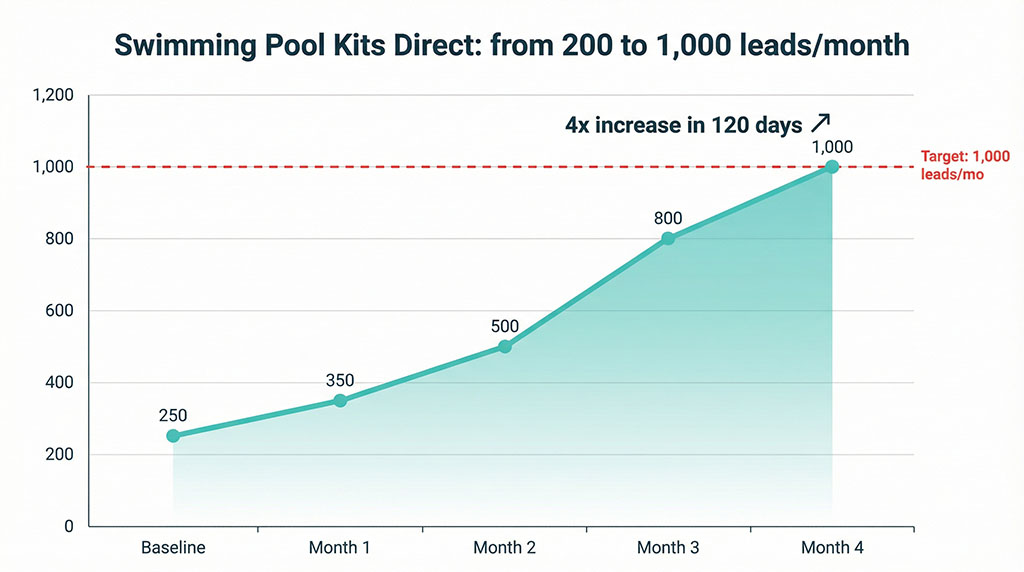

By month three, they were at 800 leads per month. By month four, 1,000: roughly a 4x increase in 120 days. And the leads kept converting, because the entire journey from ad to enquiry form felt like one coherent conversation instead of a patchwork of disconnected messages.

The prospect couldn’t tell AI was involved. The CGI was invisible.

The gap is widening

Two years ago, everyone was at roughly the same starting line with AI. Generic tools, generic prompts, generic output. The playing field was level because nobody knew what they were doing.

That window has closed.

The businesses that built intent-aligned AI systems — purpose-built architectures encoded with their specific goals, values, and trade-offs — are now compounding their advantage every week. Their systems learn from their own data. Their output gets more natural, more aligned, more effective with each iteration. They’re not just faster than businesses using generic tools. They’re operating on a different plane.

[I’ve written in detail about why the traditional agency model can’t keep up with this shift and what the alternative looks like — read it here.]

Meanwhile, most businesses are still copy-pasting prompts from a free PDF someone shared on LinkedIn. Still operating at level one. Still getting output that smells like every other piece of AI-generated content in their market. The tells are invisible to them and obvious to everyone else.

A mediocre marketer with AI that truly understands the business will outperform a talented marketer using generic AI tools. Every time. Not because the AI replaced the skill — but because the intent alignment amplified whatever skill was already there.

Your competitors are either stuck in the same generic AI rut (your opportunity), or they’ve already encoded their business intent into their AI systems and are pulling ahead (your problem). The longer you wait, the more their advantage compounds.

The invisible effect

Nobody walks out of a great movie thinking about the CGI. They think about the story. The characters. How it made them feel. The technology was there the entire time — rendering every frame, compositing every shot — but it never announced itself. It served the story instead of interrupting it.

That’s what good AI-assisted marketing feels like. Not like technology performing tricks. Like a sharp human who understands the market, speaks the prospect’s language, and has something specific to say. No “unlocking potential.” No “game-changing solutions.” No faint whiff of silicon.

Your prospects don’t care whether AI was involved. They care whether the message lands — whether it speaks to their specific problem, in language that feels real, with an offer that makes sense for their situation. The tool doesn’t matter. The intent behind the tool determines everything.

Right now, your marketing is either the invisible effect — or it’s Buck Wild.

Your audience knows the difference. Even if you don’t.

Not sure whether your marketing passes the CGI test?

My free Agency Waste Audit shows you exactly where your setup is leaking budget — and whether your current AI usage is invisible or announcing itself.