He’s staring at the dashboard. Numbers are green. ROAS is acceptable. The agency report landed in his inbox this morning with three highlighted wins and a confident subject line.

“Must be working,” he says.

Clyde Bedell heard that sentence in 1964 and called it what it was: the single most expensive lie in advertising.

The professional who invented the standard

Bedell spent forty years studying why some ads produce results and others produce maximum results. He ran clinics across the United States, Europe, Asia, and Australia. He taught merchants, agency people, newspaper publishers. And everywhere he went, he encountered the same character: the weak ad creator who becomes “instantly defensive when you begin to talk about how good ads ought to be.”

The script never changed. “We got response from this ad, it must be pretty good.” “We didn’t expect a lot of direct response; we just wanted to sort of create a store image.”

Bedell’s verdict was blunt: “All this is baloney.”

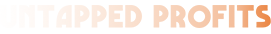

The professional, he argued, doesn’t want just response. The professional insists on maximum response. And the distance between those two standards — between “some results” and “maximum results” — is not a gap in budget or creative talent. It’s a gap in mindset. One that costs businesses far more than they ever calculate, because you can’t calculate what you never thought to measure.

Why "some results" is the most dangerous outcome in advertising

“Some results” are real. The numbers aren’t fake. The campaign did produce something. And that’s precisely what makes the rationalisation so seductive — and so damaging.

When results exist, the instinct is to protect them. Question the campaign and you’re questioning the decision to run it. Question the agency and you’re questioning your own judgment in hiring them. Question the ROAS and you’re questioning the whole framework you’ve been using to feel competent.

So most people don’t question it. They adjust around the edges. They test a new headline. They tweak the bid. They produce a monthly report that shows incremental improvement over last month, which showed incremental improvement over the month before.

Progress. Measurable progress. Against a baseline that was never challenged.

Bedell had a name for this too. He called it “getting results” versus “getting maximum results” — and he was almost angry about the confusion between the two. The best ad creators, he wrote, don’t use ads to build a store image or generate some traffic or keep the brand visible. They create ads “to sell merchandise and to make a great many people happy that they came to that store to buy.” That’s the only legitimate target. Everything else is rationalisation dressed up in reporting software.

The dashboard won't show you the gap

Now here’s where most readers hit a wall — and I want to name it before you do.

You’re thinking: I measure everything. I have dashboards, attribution models, weekly reporting. If there were a performance ceiling I hadn’t found, the data would show it.

That’s the trap. And it’s the most sophisticated version of the same rationalisation Bedell identified sixty years ago.

The data shows you what you’re measuring. It cannot show you what you haven’t thought to ask. A maximum results standard doesn’t live in the metrics — it lives in the questions that precede them. What would this account look like if we assumed the current performance was the floor, not the target? What would we have to believe was true for these numbers to be considered mediocre? What if the constraint isn’t budget, or creative, or audience size — but the standard we applied when we set this up?

Those are not questions a “some results” mindset generates. They feel reckless. Possibly ungrateful. You have green numbers. Why are you looking for problems?

Because maximum results and some results can look identical on a dashboard. The gap between them is not visible in the data. It’s visible only in the professional standard of the person reading it.

Two species, not two skill levels

Bedell described the weak ad creator and the professional ad creator as two different species, not two different skill levels. The weak creator is satisfied by response. The professional is dissatisfied by anything short of maximum response — and that dissatisfaction is not neurotic or perfectionist. It’s the job.

This is a harder standard to hold than it sounds. “Some results” comes with social proof. The agency is confident. The numbers are green. Everyone in the room is nodding. Pushing for maximum results in that environment requires a specific kind of professional stubbornness — the willingness to ask, out loud, whether the campaign that’s working is working as hard as it possibly can.

Not harder than last month. As hard as it can.

That question makes people uncomfortable. It should. It’s the most important question in advertising, and the one least often asked.

The one question to ask before every campaign review

The practical application is simpler than the philosophy.

Before you review any campaign, any account, any monthly report, ask one question first: What would maximum results look like for this, and how would I know if we were there?

If you can’t answer that — if the only benchmark is “better than last month” — you don’t have a performance standard. You have a trend line. And trend lines will tell you whether you’re improving. They will never tell you whether you’re near the ceiling or nowhere close to it.
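
A rough sketch in Python shows the difference between a trend line and a standard. Every number in it is invented for illustration, and `assumed_ceiling` is a hypothetical benchmark you would have to set yourself (from a best comparable account, say, or a best-performing test). Nothing here comes from Bedell.

```python
# Hypothetical monthly ROAS figures, invented purely for illustration.
monthly_roas = [2.0, 2.1, 2.2, 2.3]

# Assumed "maximum results" benchmark. This is an assumption, not a measured value:
# the dashboard will not hand it to you, so someone has to decide what it is.
assumed_ceiling = 4.0


def month_over_month_change(series):
    """Percentage change from each month to the next (the trend line)."""
    return [(curr - prev) / prev * 100 for prev, curr in zip(series, series[1:])]


def share_of_ceiling(value, ceiling):
    """Percentage of the assumed maximum that the current figure captures."""
    return value / ceiling * 100


trend = month_over_month_change(monthly_roas)
captured = share_of_ceiling(monthly_roas[-1], assumed_ceiling)

print("Month-over-month change (%):", [round(t, 1) for t in trend])
print("Share of assumed ceiling (%):", round(captured, 1))
```

With these made-up figures, every month shows a gain of roughly five percent, yet the account sits at a little over half of the assumed ceiling. The first list is what the monthly report celebrates. The second number only exists once someone has decided what maximum looks like.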

That’s the work Bedell was describing. Not smarter creative. Not bigger budgets. Not better targeting. The prior question: what does maximum look like, and are we measuring against that?

The man staring at the green dashboard is still there. Numbers still look good. Report still landed with three highlighted wins.

The only thing that’s changed is the question he’s about to ask.

Not “is this working?”

“Is this working as hard as it can?”

Those two questions produce different answers. Different investigations. Different agencies, sometimes. Different standards, always.

One of them leads to results.

The other leads to maximum results.

Sixty years on, Bedell’s point stands: the distance between them is not a budget problem. It’s a mindset problem. And mindset problems are the only ones you can fix for free.

Find out if your campaigns are at the ceiling — or nowhere near it

The Agency Waste Audit starts with one question: what would maximum results actually look like for your business? Most accounts we review have never been asked that question. It takes 30 minutes.