The campaign cost $47,000 to produce and six weeks to build. The creative brief was tight, the concept was strong, the video looked genuinely cinematic. The agency presented it on a Friday afternoon with slides that used words like “brand story” and “emotional resonance.” Everyone in the room felt good about it.

It went live on a Tuesday. By day eleven, the CTR was 0.4%. Cost per click: $18.40. The algorithm had decided it wasn’t worth showing. There was nothing left to test—one execution, one bet, $47,000 locked in. Three weeks remained in the quarter.

This is not a story about a bad agency. It’s a story about a structural problem that most business owners don’t see until it’s too late. The creative wasn’t the problem. The model was—brief to concept to approval to launch, a process designed for a media environment that no longer exists.

The Doctrine That Made Sense (And Still Has Its Champions)

The advertising industry was built around a single organizing principle: the Big Idea wins. Find the one concept that captures your brand’s truth, execute it with precision, spend heavily behind it, and let time do the rest.

This doctrine made sense for fifty years. When you were buying television airtime or magazine spreads, you had one shot. Distribution was expensive, production was expensive, your window was fixed. Under those conditions, the right answer really was to slow down, get the idea right before launch, and commit. Volkswagen’s “Think Small” was the product of Helmut Krone and Julian Koenig iterating obsessively—but internally, behind closed doors, not in the live market. The winners were the agencies that got the idea right before the work went out.

The logic cascaded through the entire industry. Agency structures, approval processes, brand guidelines, six-week production cycles—all of it was designed to protect the Big Idea from the market until it was ready.

The problem is that the market changed while the doctrine didn’t.

What Actually Drives Performance (The Number Nobody Talks About)

Before getting to what changed, it’s worth establishing what we’re actually trying to get right. Because most business owners are optimizing for the wrong variable.

Ask ten business owners what drives campaign performance and you’ll hear: targeting, audience selection, spend, the algorithm. The research says something different. NCSolutions’ meta-analysis of 450 campaigns—one of the most rigorous advertising effectiveness studies ever conducted—found that creative drives 49% of a campaign’s incremental sales impact. Targeting contributes 11%. Reach, 14%. Google’s own research puts creative’s share of campaign success at 70%.

The creative is the campaign. Everything else is distribution.

Here’s the brutal implication. If creative quality is responsible for 49 to 70% of your results, and professional marketers can accurately predict which ads will perform only 51% of the time (essentially a coin flip, according to a study by Byron Sharp’s team at the Ehrenberg-Bass Institute), then the only reliable strategy is to test enough variants to find what actually works rather than betting on one team’s gut instinct.

Most businesses are running somewhere between 8 and 20 creative variants per month. Of those, approximately 6.6% will become genuinely scalable winners.

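Do the math. At 10 creatives per month and a 6.6% hit rate, you find about 0.66 scalable winners a month: roughly one winner every six weeks. At 8 creatives per month, it is closer to one every two months.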
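The arithmetic behind that line, as a quick sketch: expected winners per month is the monthly variant count times the 6.6% hit rate, and the average wait for one winner is the reciprocal. It assumes every variant has the same independent chance of becoming a winner, which is a simplification.

```python
# Expected scalable winners per month, assuming each variant independently
# has the same 6.6% chance of becoming a winner (a simplifying assumption).
HIT_RATE = 0.066

def winners_per_month(variants_per_month: int, hit_rate: float = HIT_RATE) -> float:
    """Expected number of scalable winners found in one month."""
    return variants_per_month * hit_rate

def months_per_winner(variants_per_month: int, hit_rate: float = HIT_RATE) -> float:
    """Average months between winners: the reciprocal of the monthly rate."""
    return 1 / winners_per_month(variants_per_month, hit_rate)

for n in (8, 10, 20):
    print(f"{n:>2} variants/month: {winners_per_month(n):.2f} winners/month, "
          f"one every {months_per_winner(n):.1f} months")
```

At 10 variants a month this works out to 0.66 winners a month, or one roughly every 1.5 months.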

The Model Is Broken, and the Numbers Prove It

The Big Idea doctrine was built on the premise that getting the idea right before launch was worth more than the cost of being wrong after. That calculus made sense when production cost $15,000 and the campaign was already locked into print placements for six months.

It doesn’t make sense anymore.

Meta’s average cost per ad increased 14% year-over-year in 2024. Search cost-per-click climbed across 86% of industries in the same period. Meanwhile, 87% of senior marketing decision-makers report significant campaign performance issues, and most end up terminating campaigns prematurely: not because the idea was wrong, but because they committed all their budget to a handful of untested assets before they had any performance data at all.

Up to 80% of marketing budgets get allocated to specific campaigns before meaningful data exists. The agency production cycle runs four to eight weeks, and video alone can stretch to ten weeks or more. By the time you know whether your creative works, the quarter is half over and the budget is spent.

You’re flying the plane after the destination has already been locked in.

The Economics That Changed the Rules

The reason the old model survived this long is that high-velocity testing wasn’t affordable for most businesses. One professionally produced video asset runs $1,000 to $5,000 for basic execution—$15,000 to $30,000 for anything agency-led. A senior in-house designer, fully loaded, produces 15 to 25 polished assets per month at roughly $350 to $400 per piece.

At those prices, testing 100 creative variants wasn’t a strategy. It was a budget conversation.

AI creative platforms broke that math. The per-asset cost now runs roughly $1.20 to $3.90 for static variants. AI-generated video has dropped from thousands of dollars per execution to $50 to $200. The cost differential is approximately 50 to 100 times per variant.

Practically speaking: the production risk of a creative test has essentially disappeared. You can now afford to test highly specific messaging angles, niche audience appeals, and unconventional visual approaches that were economically unviable at $1,500 per variant but are rational experiments at $2.50.
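For a side-by-side view, the sketch below prices a 100-variant test program at each per-asset range quoted in this section. It counts production only (no media spend), and the price ranges are the ones stated above, not vendor quotes.

```python
# Production cost of a 100-variant test at the per-asset price ranges quoted
# above. Media spend is deliberately excluded.
PRICE_RANGES = {
    "in-house designer (static)": (350.00, 400.00),
    "AI static variant": (1.20, 3.90),
    "basic produced video": (1_000.00, 5_000.00),
    "AI-generated video": (50.00, 200.00),
}

def program_cost(n_variants: int, low: float, high: float) -> tuple[float, float]:
    """Return the (low, high) production cost for n_variants assets."""
    return n_variants * low, n_variants * high

for label, (low, high) in PRICE_RANGES.items():
    low_cost, high_cost = program_cost(100, low, high)
    print(f"{label:<28} ${low_cost:>10,.0f} to ${high_cost:>10,.0f}")
```

At the in-house rate, 100 static variants cost $35,000 to $40,000 to produce; at the AI rates quoted, $120 to $390.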

The bottleneck has shifted from “can we afford to test this” to “do we have the strategic judgment to know what to test.” That is a fundamentally different problem—and one that business owners who know their customers are far better positioned to solve than they’ve been given credit for.

The Algorithm Is Already Rewarding the Brands That Figured This Out

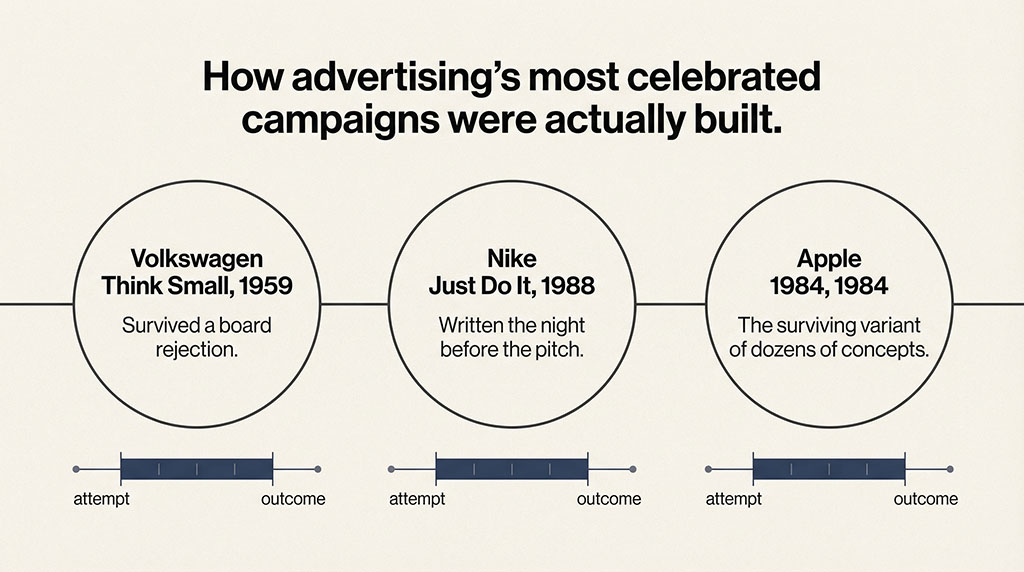

The platform data on this is specific. Meta’s analytics team published an experimentally validated study across roughly 26,000 cases showing that at four repeated exposures to the same creative, conversion likelihood drops approximately 45%. Once ad frequency exceeds 3.0, engagement falls 20 to 30% per week. Creative fatigue on Meta begins within 5 to 7 days for cold traffic audiences. On TikTok, it starts in 3 to 5 days.

The platforms are not patient. They want fresh creative constantly, and they penalize accounts that don’t provide it.
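One way to act on these thresholds is a refresh check that flags a creative once its frequency (impressions divided by unique reach) passes 3.0, or once it has been live past the fatigue window for its platform. The thresholds come from the figures above; the `CreativeStats` record and `needs_refresh` function are hypothetical, and the check uses the early end of each fatigue range so it errs toward refreshing sooner.

```python
from dataclasses import dataclass

# Cold-traffic fatigue windows in days, using the early end of each quoted range.
FATIGUE_DAYS = {"meta": 5, "tiktok": 3}
MAX_FREQUENCY = 3.0  # above this, engagement reportedly falls 20-30% per week

@dataclass
class CreativeStats:
    """Hypothetical delivery stats for one creative."""
    platform: str     # "meta" or "tiktok"
    days_live: int
    impressions: int
    reach: int        # unique people who saw it

    @property
    def frequency(self) -> float:
        # Average number of times each reached person saw the creative.
        return self.impressions / self.reach if self.reach else 0.0

def needs_refresh(stats: CreativeStats) -> bool:
    """True once a creative passes the frequency cap or its fatigue window."""
    return (stats.frequency > MAX_FREQUENCY
            or stats.days_live >= FATIGUE_DAYS[stats.platform])

print(needs_refresh(CreativeStats("meta", days_live=4, impressions=90_000, reach=40_000)))    # False
print(needs_refresh(CreativeStats("tiktok", days_live=3, impressions=20_000, reach=15_000)))  # True
```

The first creative is still inside Meta’s window at a frequency of 2.25; the second has reached the start of TikTok’s three-day window.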

The minimum velocity threshold to prevent CAC inflation is approximately 0.8 new creatives per week for every $10,000 of weekly ad spend. The working range is 1.5 to 3.0. For a business spending $20,000 per week on paid social, that means 3 to 6 genuinely new creative variants every week, or 12 to 24 every month. Not per quarter. Per month.
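Here is the velocity arithmetic as a short sketch. It assumes the benchmark means new creatives per week for each $10,000 of weekly spend, and it uses a four-week month; both are assumptions about how the figure is meant to be applied.

```python
# Assumed reading of the benchmark: N new creatives per week for every
# $10,000 of weekly ad spend, with a four-week month for simplicity.
MIN_RATE = 0.8
WORKING_LOW, WORKING_HIGH = 1.5, 3.0
WEEKS_PER_MONTH = 4

def monthly_creatives(weekly_spend: float, rate: float,
                      weeks: int = WEEKS_PER_MONTH) -> float:
    """New creatives needed per month at `rate` per $10,000 of weekly spend."""
    return weekly_spend / 10_000 * rate * weeks

scenarios = {
    "$20,000 per week": 20_000,
    "$20,000 per month": 20_000 / WEEKS_PER_MONTH,  # average weekly spend
}
for label, weekly in scenarios.items():
    floor = monthly_creatives(weekly, MIN_RATE)
    low = monthly_creatives(weekly, WORKING_LOW)
    high = monthly_creatives(weekly, WORKING_HIGH)
    print(f"{label}: floor {floor:.1f}, working range {low:.1f} to {high:.1f} per month")
```

Under that reading, 12 to 24 new creatives a month corresponds to about $20,000 of weekly spend; a $20,000 monthly budget works out to 3 to 6.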

For context: an agency retainer delivering 30 to 40 creatives monthly is often cycling minor variations on a single approved concept. Meta’s underlying retrieval engine now uses the creative asset itself as the primary targeting mechanism—it evaluates for genuine signal diversity. Swapping a headline or changing a background color doesn’t register as a distinct creative. The algorithm wants 8 to 12 fundamentally different concepts.

The brands operating this way are posting real numbers. An A/B test comparing 25 creative variants against 5—same budget, same audience—produced 34% lower cost per acquisition and 171 more purchases, despite reaching 59,000 fewer people. Campaigns starting with 8 to 12 distinct concepts on Meta’s Advantage+ platform saw 40%+ CPA improvement over manual, low-volume controls. These brands aren’t spending more. They’re learning faster.

Why the Gap Compounds—and Why You Can't Close It With Budget Later

This is the part that matters most strategically.

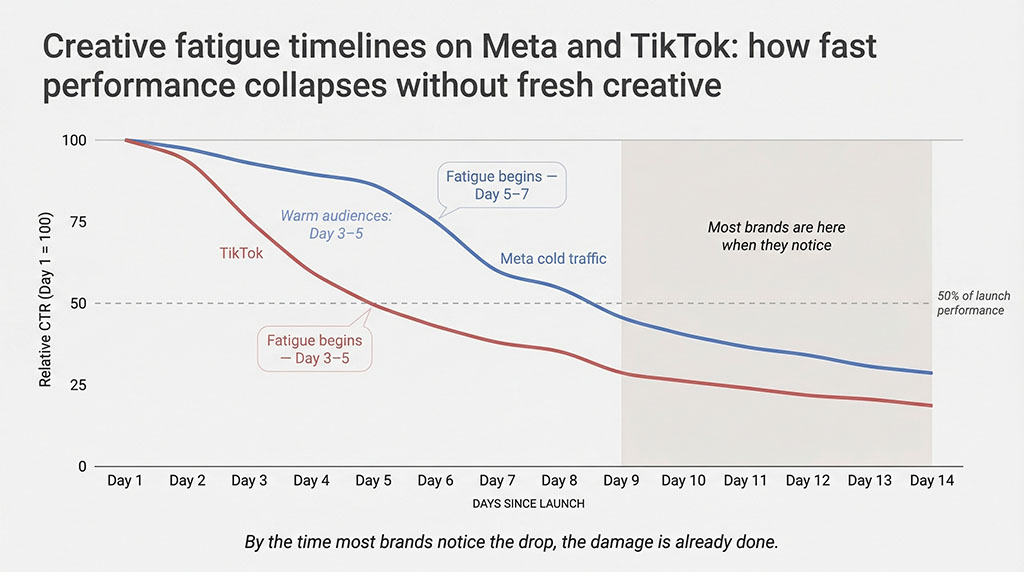

Think about two businesses in the same category. Brand A runs its campaigns the traditional way—five creative variants per month, most of them variations on an approved concept. Brand B builds a testing infrastructure that generates 50 variants per month, using AI for production and internal customer knowledge for strategy. In month one, their performance looks similar. The gap isn’t dramatic yet.

By month six, Brand B has run 300 tests. They know which visual hooks stop the scroll for their specific audience. They know whether their customer responds to problem-first or solution-first framing. They know that a UGC testimonial from a woman in her 40s outperforms a polished lifestyle video by 2.3x for their product. They’re not guessing anymore. They have data so specific to their own customers that no competitor can borrow it.

Brand A has run 30 tests. They know roughly as much as they did in month one.

BCG’s experience curve research, validated across industries from semiconductor manufacturing to automobiles, shows that error rates and costs decline 10 to 25% every time cumulative experience doubles. Applied to advertising, the closest analogue is creative hit rate: the percentage of tested variants that become scalable winners. Brand B’s hit rate improves monthly because each test is built on the last. Brand A’s hit rate stays flat.
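As a rough illustration, the experience curve is a power law: if a quantity falls by a fraction r every time cumulative experience doubles, then growing experience from n0 to n multiplies it by (1 − r) raised to log2(n / n0). The sketch applies that to the six-month test counts for Brand A and Brand B. Treating “tests run” as the unit of experience is an assumption made for the analogy, not something the BCG research measured.

```python
import math

def experience_multiplier(n0: float, n: float, decline_per_doubling: float) -> float:
    """Factor applied to a cost or error rate when cumulative experience grows
    from n0 to n, assuming a constant fractional decline per doubling."""
    doublings = math.log2(n / n0)
    return (1 - decline_per_doubling) ** doublings

# Analogy only: Brand B's 300 tests versus Brand A's 30 after six months.
doublings = math.log2(300 / 30)
for r in (0.10, 0.25):
    factor = experience_multiplier(30, 300, r)
    print(f"{r:.0%} decline per doubling over {doublings:.1f} doublings: "
          f"{1 - factor:.0%} lower")
```

Across the 10% to 25% range, Brand B’s figure would sit roughly 30% to 62% below Brand A’s, if the curve held in this setting.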

By month eighteen, Brand B has run 900 tests to Brand A’s 90 and is operating on a plane of creative intelligence that budget alone cannot purchase. The only way to close an 810-test learning gap is to run 810 more tests, and you can’t run them retroactively. The platforms need time to serve them, measure them, and feed the signals back into your decisions.
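The compounding gap is easy to tabulate. This sketch uses the two testing rates from the example, 5 and 50 variants per month, and assumes both brands hold those rates steady.

```python
BRAND_A_PER_MONTH = 5
BRAND_B_PER_MONTH = 50

def cumulative_tests(per_month: int, months: int) -> int:
    """Total tests run after a number of months at a steady monthly rate."""
    return per_month * months

for month in (1, 6, 12, 18):
    a = cumulative_tests(BRAND_A_PER_MONTH, month)
    b = cumulative_tests(BRAND_B_PER_MONTH, month)
    print(f"month {month:>2}: A={a:>3}, B={b:>3}, gap={b - a}")
```

At Brand A’s pace the gap never closes on its own: it widens by 45 tests every month.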

This is what separates a structural moat from a tactical advantage. You can outspend a competitor. You cannot out-learn them in reverse.

What This Actually Looks Like

This is not an argument for firing your agency or abandoning creative judgment. It’s an argument for restructuring where your production budget goes.

The shift has two components. First, collapse the per-unit production cost. AI tools handle static and video variation production at a fraction of traditional cost—meaning your existing production budget, redirected, can fund 10 times the number of tests. Second, invest the human creative energy where it can’t be automated: hypothesis generation. What messaging angles haven’t you tested? What emotional triggers have you assumed rather than verified? What does your customer believe about their problem that’s actually wrong, and what would happen if an ad said so directly?

The businesses winning right now are treating advertising the way software companies treat product development. Run the test. Read the data. Apply the learning. Build the next test on top of the last one. The bottleneck is no longer “can we build it.” It’s “what should we build next.” That second question is a human question. A founder question. It requires understanding your customer, your category, and your competitive position in a way no agency has by default—but you do.
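One way to picture the “read the data, apply the learning” step is a simple cut-and-scale rule applied after each test window: variants that beat a target cost per acquisition get the budget, variants that have spent enough to judge and still miss the target get cut, and variants without enough spend yet keep running. This is a minimal sketch, not a feature of any ad platform; the `Variant` record, the target CPA, the minimum-spend threshold, and the proportional-to-conversions split are all hypothetical choices for illustration.

```python
from dataclasses import dataclass

@dataclass
class Variant:
    """Hypothetical per-variant results after one test window."""
    name: str
    spend: float
    conversions: int

    @property
    def cpa(self) -> float:
        # Cost per acquisition; infinite if nothing converted yet.
        return self.spend / self.conversions if self.conversions else float("inf")

def reallocate(variants: list[Variant], budget: float,
               target_cpa: float, min_spend: float) -> dict[str, float]:
    """Return next window's spend per variant; cut variants are omitted."""
    winners = [v for v in variants if v.cpa <= target_cpa]
    # Missed the target but haven't spent enough to judge: keep at current spend.
    unproven = [v for v in variants if v.cpa > target_cpa and v.spend < min_spend]
    plan = {v.name: v.spend for v in unproven}
    remaining = max(budget - sum(plan.values()), 0.0)
    total_conversions = sum(v.conversions for v in winners)
    for v in winners:
        # Winners share the rest of the budget in proportion to conversions.
        plan[v.name] = remaining * v.conversions / total_conversions
    return plan

results = [
    Variant("hook-a", spend=400, conversions=10),  # CPA 40: winner
    Variant("hook-b", spend=400, conversions=4),   # CPA 100 after enough spend: cut
    Variant("hook-c", spend=150, conversions=1),   # CPA 150 but too early to judge
    Variant("hook-d", spend=400, conversions=20),  # CPA 20: winner
]
print(reallocate(results, budget=3_000, target_cpa=50, min_spend=300))
```

Splitting by conversions is the bluntest reasonable choice; a team could just as well weight by CPA or hold some budget back for the next batch of new hypotheses.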

Back to that campaign. $47,000. Six weeks. One execution. CTR at 0.4%.

Now run the same scenario with a different architecture. Same $47,000. Same quarter. But week one: fifteen variants, different hooks, different emotional angles, different formats. Some polished, some raw. Total production cost: under $3,000. By day ten, three are outperforming. By week three, you’ve doubled down on what works, cut what doesn’t, and you’re still generating data with three weeks left to go.

The creative isn’t beautiful. But it’s working.

And in six months, when a competitor tries to enter your market with a $50,000 agency budget and one beautifully produced campaign, they’ll find that you’ve already mapped this territory. You’ve already run the tests they’re about to run. You already know what their customers respond to—because they’re your customers now.

That’s not luck. That’s what a learning machine looks like from the outside.

Find out whether your creative testing rate is building an advantage or burning budget

Most accounts we audit have the same problem: spend allocated before any data exists, creative variants that are really just one idea in three colorways, and no system for turning what works into what’s next.

Takes 30 minutes.